Traditional IT requirements in edge environments are already creating barriers to efficient and effective long-term deployment

By David Craig, CEO, Iceotope

The edge is fluid, not fixed.

Edge complexities for secure, remote, roadside and even in-building locations are already challenging for traditional air-cooling of IT equipment. The initial deployment must be effective, or the upgrade costs will set back edge capabilities for years to come. Building edge computing at scale is emerging as a key challenge faced by almost every industry.

Edge computing definitions already exist for: Industrial Edge; Consumer Edge; Network Edge; Autonomous Transport Edge; Critical Power and Transport Infrastructure Edge; Security Edge; and many others. Each of these has subsets of applications and deployment needs. Each IT network environment will have its own unique requirements. Even within the different sectors mentioned, individual edge data centre networks will vary significantly. Edge is not fixed by sector, use or design.

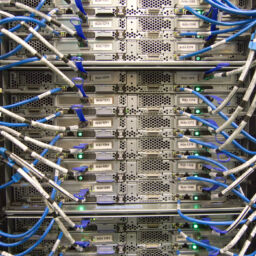

ICT within micro edge data centres will in turn be specified to support a vast array of different workloads and varying criticality levels. However, common to all edge data centre computing is the need to house and protect pre-tested IT server, storage and networking equipment in physical environments which can operate autonomously and reliably for long periods.

Power densities are variable at the fluid edge

IoT and edge are constantly expanding and, with them, the volume of data being generated, monitored and transferred between devices, processors and storage. Clearly, edge deployments will not be homogeneous. There will be installation differences across industries and even within the same organisation.

These differences include, but will not be restricted to, differing power density needs (as dictated by the compute density) per module in a given edge roll-out. More electrical power for data processing inevitably means more heat is generated and must be removed; consequently, greater cooling capacity is required.

Growing evidence indicates that compute, whether at high or low power densities in robust and sealed micro data centre environments, can best be delivered via sealed chassis-level liquid cooling technology.

Uniquely, chassis-level liquid cooling provides reliable operation of ICT infrastructure including component protection, guarantees technical performance and enables significant reductions in the number of required service interventions.

The variety of edge deployments indicates that solutions will not be homogeneous.

High data centre power densities are required for high-performance workloads and data-intensive throughput in sectors such as processing, manufacturing and bio-sciences, as well as communications networks. In fact, with advances in the use of AI software applications, it is hard to imagine a single industry sector where high-performance edge computing will not be required.

At the same time, the intensifying lobby for sustainability in data centres in all their formats will compel industries to invest in low-power-density but optimised compute within edge data centres.

Fluid Edge – Liquid Protection

Whether at high or low power densities, the need to protect ICT equipment in new and harsh environments remains constant.

Air-cooling will not protect IT equipment at the edge. In these non-clean room locations, current air-cooling techniques do not segregate delicate electronic circuits and components from gaseous and particulate contaminants or humidity. To meet the economic viability constraints of edge scale-up, street-level containers must have a guaranteed solution to contamination; otherwise, deployments may not comply with component manufacturers’ warranty conditions.

At the liquid edge, air-cooling can only offer a limited short-term solution and it brings with it a host of complexities and problems, such as the need for regular intrusive maintenance of fans and filters which will require equipment shutdown. As every IT and M&E engineer knows, equipment fails most often when it is being switched off or switched on. A large majority of downtime occurs through human error, usually associated with maintenance.

Even the simple act of servicing components like filters in an air-cooled edge environment introduces a greater risk to the continuity of IT services. A recent ASHRAE TC9.9 report ‘Edge Computing: Considerations for Reliable Operations’ considered servicing and highlighted the additional contamination risk through maintenance access.

Today, deployed edge compute infrastructure units require an average of 2.5kW of power. The data processing, storage and networking workloads expected to be handled at the fluid edge will drive up average power densities. These are expected to rise rapidly to 5kW and then probably continue higher. In some HPC edge environments, designs have already expanded to 7.5kW-10kW. At the fluid edge, IT and infrastructure will need to scale.
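To give a feel for what these power levels mean for heat removal, the sketch below applies the standard heat balance Q = ṁ · c_p · ΔT to the 2.5kW, 5kW, 7.5kW and 10kW figures quoted above. It is a rough illustration only, not a design calculation: it assumes all electrical power ends up as heat, a 10 K coolant temperature rise, and approximate textbook properties for water and air. Water is used purely as a stand-in liquid coolant, and the function and constant names are hypothetical.

```python
# Rough heat-balance sketch: Q = m_dot * c_p * delta_T.
# All values below are illustrative assumptions, not vendor figures.

# Approximate fluid properties near room temperature (assumed).
WATER_CP = 4186.0       # specific heat, J/(kg*K)
WATER_DENSITY = 998.0   # kg/m^3
AIR_CP = 1005.0         # specific heat, J/(kg*K)
AIR_DENSITY = 1.2       # kg/m^3

# Power levels quoted in the article, in kW (assumed to become heat entirely).
HEAT_LOADS_KW = [2.5, 5.0, 7.5, 10.0]

# Assumed temperature rise of the coolant across the equipment, in kelvin.
DELTA_T_K = 10.0


def volumetric_flow_m3_per_s(heat_load_w, cp, density, delta_t_k):
    """Return the coolant volume flow needed to carry away heat_load_w."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (cp * delta_t_k)
    return mass_flow_kg_per_s / density


def litres_per_minute(flow_m3_per_s):
    """Convert m^3/s to litres per minute."""
    return flow_m3_per_s * 1000.0 * 60.0


if __name__ == "__main__":
    for load_kw in HEAT_LOADS_KW:
        load_w = load_kw * 1000.0
        water = volumetric_flow_m3_per_s(load_w, WATER_CP, WATER_DENSITY, DELTA_T_K)
        air = volumetric_flow_m3_per_s(load_w, AIR_CP, AIR_DENSITY, DELTA_T_K)
        print(
            f"{load_kw:>4.1f} kW: water {litres_per_minute(water):6.2f} L/min, "
            f"air {air:5.2f} m^3/s"
        )
```

Under these assumptions the required flow scales linearly with heat load, and the volume of air needed is several thousand times the volume of water for the same temperature rise, which is the basic physical reason liquid can carry heat away in a far more compact form.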

As more data is processed at the edge, more powerful chips will be installed, requiring more power and generating more heat. Only chassis-based liquid cooling can scale to protect vital ICT equipment whilst minimising environmental impact.

Liquid cooling provides highly effective edge data centre cooling in every location from factories to farms, and in all environmental conditions, whether extremely cold or extremely hot, as well as in fluctuating climates with snow, rain, wind and heatwaves.

The effectiveness of chassis-level liquid cooling solutions is increased because they precisely target ICT hot spots. The protection comes from directing the cooling through precision pipework within sealed units inside the edge data centre. The ICT is not immersed in liquid but protected and cooled at the server chassis level.

At the fluid edge, ICT equipment is not exposed to contaminant-bearing cooling air streams, nor does the infrastructure require venting for heat removal, which would otherwise provide a vector for physical intrusion, whether accidental or malicious. It is also silent in operation, with no fan noise to cause a nuisance in residential locations.

Fluid Future

Power requirements, and by extension cooling technology choices, made today for edge compute data centre infrastructure will determine the success of continuous operations and infrastructure protection.

Achieving the long-term benefits and economies of scale of edge requires fresh thinking about the base-level infrastructure which will house, power and cool the digital infrastructure, in the form of micro data centres, on which global companies and their customers will depend.

The fluid edge is not fixed. Chassis-level liquid cooling is the most effective technology solution to meet edge compute scale, ICT protection, security, power and density needs.

At the fluid edge, there will be as many applications as there are industrial and service processes in existence.

The fluid future is already here: chassis-based liquid cooling probably offers the most efficient protection and performance for edge ICT data centres.